You've shipped an AI feature. It works great in your demo. Then a user asks something slightly off-script and the whole thing falls apart. Sound familiar?

Testing AI isn't like testing a button click or a database query. The outputs are non-deterministic, context-dependent, and sometimes just vibes. But that doesn't mean you can't test them well; you just need the right approach for the right situation.

Let's break down the main approaches to AI testing, when each one shines, and how to actually implement them.

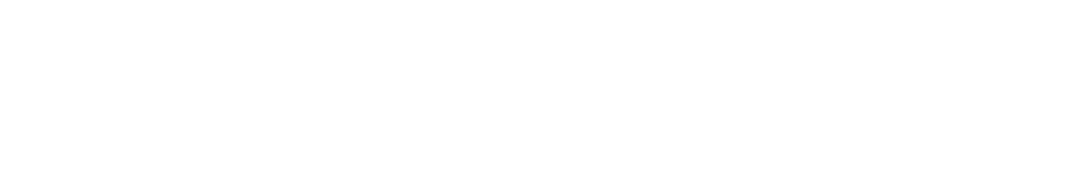

Unit Testing Prompts

What it is: Testing individual prompt templates and their outputs against expected patterns or structures.

When to use it: When you have well-defined prompts that should return predictable structures, such as classification tasks, structured data extraction, or routing decisions.

Why it works: It catches regressions early. If you change a prompt and suddenly your classifier stops returning valid categories, you'll know before it hits production.

```typescript
import { generateText } from 'ai';
import { openai } from '@ai-sdk/openai';
import { describe, it, expect } from 'vitest';

describe('categorise support ticket', () => {
  it('should classify a billing query correctly', async () => {
    // This makes a real API call, so it needs an OpenAI API key and costs money.
    const { text } = await generateText({
      model: openai('gpt-4o-mini'),
      prompt: `Classify this support ticket into one of: billing, technical, general.
Respond with only the category.
Ticket: "I was charged twice for my subscription"`,
    });

    // Normalise before comparing: models often add whitespace or capitals.
    expect(text.trim().toLowerCase()).toBe('billing');
  }, 30_000); // Real API calls can be slower than vitest's default 5s timeout.
});
```

Keep these tests focused on structure and classification rather than exact wording. You're testing that the model understands intent, not that it writes the same sentence twice.
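One way to keep assertions about structure rather than exact wording is to normalise the model's reply and check it against the allowed labels before comparing. A minimal sketch follows; `parseCategory` and `CATEGORIES` are illustrative names, not part of the AI SDK or vitest.

```typescript
// Allowed labels for the support-ticket classifier (illustrative).
const CATEGORIES = ['billing', 'technical', 'general'] as const;
type Category = (typeof CATEGORIES)[number];

// Normalise a raw model reply and return a valid category, or null if the
// reply doesn't match any allowed label. Tolerates case, surrounding
// whitespace, quotes, and trailing punctuation.
function parseCategory(raw: string): Category | null {
  const cleaned = raw
    .trim()
    .toLowerCase()
    .replace(/^["'`]+|["'`.!]+$/g, '');
  return (CATEGORIES as readonly string[]).includes(cleaned)
    ? (cleaned as Category)
    : null;
}

console.log(parseCategory('Billing.'));            // → billing
console.log(parseCategory('  "technical"\n'));     // → technical
console.log(parseCategory('It is about billing')); // → null
```

In the test above you could then assert `expect(parseCategory(text)).toBe('billing')`, so a stray full stop no longer fails the test, while an off-list answer still does (it comes back as `null`).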

Evaluation-Driven Testing (Evals)

What it is: Running your AI against a curated dataset of inputs and scoring the outputs, either with another LLM or with heuristic checks.

When to use it: When you're iterating on prompts or comparing models and need a repeatable quality benchmark. This is the backbone of serious AI development.

Why it works: It gives you a score you can track over time. Prompt change improved your eval score from 72% to 85%? Ship it. Dropped to 60%? Revert.
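The ship-or-revert rule is simple enough to encode as a gate in a release script. The sketch below assumes scores are fractions between 0 and 1 and adds a small noise band, since repeated runs of the same prompt can produce slightly different scores; `compareEvalScores` and the `minDelta` default are illustrative choices, not a standard.

```typescript
// Possible outcomes when comparing a candidate prompt or model to the baseline.
type Verdict = 'ship' | 'revert' | 'no-change';

// Compare two eval scores (fractions between 0 and 1). Differences smaller
// than minDelta are treated as noise. The 0.02 default is an arbitrary,
// illustrative threshold; tune it to how noisy your own evals are.
function compareEvalScores(
  baseline: number,
  candidate: number,
  minDelta = 0.02,
): Verdict {
  const delta = candidate - baseline;
  if (delta >= minDelta) return 'ship';
  if (delta <= -minDelta) return 'revert';
  return 'no-change';
}

console.log(compareEvalScores(0.72, 0.85)); // → ship
console.log(compareEvalScores(0.72, 0.6));  // → revert
console.log(compareEvalScores(0.72, 0.73)); // → no-change
```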

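```typescript
import { generateText } from 'ai';
import { openai } from '@ai-sdk/openai';

// A small, curated eval set. Grow it with every bug report and edge case.
const testCases = [
  { input: 'What are your opening hours?', expectedTopic: 'hours' },
  { input: 'I need to reset my password', expectedTopic: 'account' },
  { input: 'Do you ship to Australia?', expectedTopic: 'shipping' },
];

async function runEval() {
  let passed = 0;

  for (const { input, expectedTopic } of testCases) {
    const { text } = await generateText({
      model: openai('gpt-4o-mini'),
      // List the allowed labels, otherwise the model may pick its own word
      // (e.g. "password") and never match the expected topic exactly.
      prompt: `Classify the topic of this message as one of: hours, account, shipping.\nRespond with one word.\nMessage: "${input}"`,
    });

    if (text.trim().toLowerCase() === expectedTopic) passed++;
  }

  const percent = Math.round((passed / testCases.length) * 100);
  console.log(`Eval score: ${passed}/${testCases.length} (${percent}%)`);
}

runEval().catch(console.error);
```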

The real power here is building up your eval dataset over time. Every bug report and every weird edge case should go into the set. Your evals get stronger as your product matures.
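The eval loop above prints an aggregate score but not which cases failed, which matters once the set grows from real bug reports. Here is a sketch of a dataset that records where each case came from, skips duplicate inputs, and reports failures; the `source` field, `addCase`, and `scoreDataset` are hypothetical names, and it assumes you have already collected the model's outputs (one per case, in order).

```typescript
// One eval case. `source` (illustrative) records where the case came from.
interface EvalCase {
  input: string;
  expected: string;
  source: 'seed' | 'bug-report' | 'edge-case';
}

interface EvalReport {
  passed: number;
  total: number;
  score: number; // fraction between 0 and 1
  failures: { input: string; expected: string; actual: string }[];
}

// Append a case unless an equivalent input (ignoring case and outer
// whitespace) is already in the set.
function addCase(dataset: EvalCase[], newCase: EvalCase): EvalCase[] {
  const key = (s: string) => s.trim().toLowerCase();
  const exists = dataset.some((c) => key(c.input) === key(newCase.input));
  return exists ? dataset : [...dataset, newCase];
}

// Score pre-collected model outputs against the dataset, keeping the failures.
function scoreDataset(cases: EvalCase[], predictions: string[]): EvalReport {
  if (predictions.length !== cases.length) {
    throw new Error('Expected one prediction per eval case');
  }
  const failures: EvalReport['failures'] = [];
  cases.forEach((c, i) => {
    const actual = predictions[i].trim().toLowerCase();
    if (actual !== c.expected) {
      failures.push({ input: c.input, expected: c.expected, actual });
    }
  });
  const passed = cases.length - failures.length;
  return {
    passed,
    total: cases.length,
    score: cases.length === 0 ? 0 : passed / cases.length,
    failures,
  };
}

// Usage: grow the set from a (made-up) bug report, then score a run.
let dataset: EvalCase[] = [
  { input: 'What are your opening hours?', expected: 'hours', source: 'seed' },
  { input: 'I need to reset my password', expected: 'account', source: 'seed' },
];
dataset = addCase(dataset, {
  input: 'My login link expired',
  expected: 'account',
  source: 'bug-report',
});

const report = scoreDataset(dataset, ['hours', 'Account', 'shipping']);
console.log(`${report.passed}/${report.total}`); // → 2/3
console.log(report.failures); // the login-link case, answered "shipping"
```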

LLM-as-Judge

What it is: Using a second LLM to evaluate the quality of your primary model's output. Instead of checking for exact matches, you ask a model whether the response was helpful, accurate, or on-topic.

When to use it: When outputs are open-ended and there's no single "correct" answer, such as summaries, explanations, or conversational replies.

Why it works: Human evaluation doesn't scale, and regex can't judge nuance. An LLM-as-judge gives you a middle ground: reasonably good quality assessment that runs automatically.

```typescript
import { generateText } from 'ai';
import { openai } from '@ai-sdk/openai';

async function judgeResponse(question: string, response: string) {
  const { text } = await generateText({
    model: openai('gpt-4o'),
    // A low temperature makes repeated judgements of the same answer more consistent.
    temperature: 0,
    prompt: `You are evaluating an AI assistant's response.

Question: "${question}"
Response: "${response}"

Rate the response from 1-5 on:
- Relevance (does it answer the question?)
- Accuracy (is the information correct?)
- Clarity (is it easy to understand?)

Respond as JSON: { "relevance": n, "accuracy": n, "clarity": n }`,
  });

  // Throws if the model wraps the JSON in prose or a code fence.
  return JSON.parse(text);
}
```
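`JSON.parse(text)` in `judgeResponse` assumes the judge returns bare JSON. Models sometimes wrap it in a Markdown code fence or add a sentence of explanation, and a score outside 1-5 is as unusable as a parse error. A defensive parser might look like this; `parseJudgeScores` and its validation rules are illustrative (the AI SDK also provides `generateObject` for schema-constrained output, which avoids hand-parsing entirely).

```typescript
interface JudgeScores {
  relevance: number;
  accuracy: number;
  clarity: number;
}

const CRITERIA = ['relevance', 'accuracy', 'clarity'] as const;

// Extract and validate judge scores from raw model text. Returns null
// instead of throwing, so one malformed judgement doesn't abort a whole run.
function parseJudgeScores(text: string): JudgeScores | null {
  // Take the span from the first "{" to the last "}", which skips code
  // fences and any prose around the JSON object.
  const start = text.indexOf('{');
  const end = text.lastIndexOf('}');
  if (start === -1 || end <= start) return null;

  let parsed: unknown;
  try {
    parsed = JSON.parse(text.slice(start, end + 1));
  } catch {
    return null;
  }
  if (typeof parsed !== 'object' || parsed === null) return null;

  const record = parsed as Record<string, unknown>;
  for (const key of CRITERIA) {
    const value = record[key];
    // Each score must be an integer on the 1-5 scale used in the prompt.
    if (typeof value !== 'number' || !Number.isInteger(value) || value < 1 || value > 5) {
      return null;
    }
  }
  return {
    relevance: record.relevance as number,
    accuracy: record.accuracy as number,
    clarity: record.clarity as number,
  };
}

console.log(parseJudgeScores('Here is my rating: { "relevance": 5, "accuracy": 4, "clarity": 5 }'));
// → { relevance: 5, accuracy: 4, clarity: 5 }
console.log(parseJudgeScores('{ "relevance": 7, "accuracy": 4, "clarity": 5 }')); // → null
```

Returning `null` lets you count unparseable judgements separately, which is itself a useful signal that the judge prompt needs work.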

A word of caution: LLM-as-judge has its own biases. It tends to prefer longer responses and can be overly generous. Use it as a signal, not as gospel.
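One cheap way to watch for the length preference is to check whether judge scores track response length across a batch. The sketch below computes a Pearson correlation between character count and the mean judge score; the function names and the sample batch are made up for illustration, and a strong positive correlation is a prompt to look closer, not proof of bias.

```typescript
interface JudgedSample {
  response: string;
  scores: { relevance: number; accuracy: number; clarity: number };
}

// Pearson correlation coefficient between two equal-length series.
// Returns NaN when either series has zero variance.
function pearson(xs: number[], ys: number[]): number {
  if (xs.length !== ys.length) throw new Error('Series must be the same length');
  const n = xs.length;
  const meanX = xs.reduce((a, b) => a + b, 0) / n;
  const meanY = ys.reduce((a, b) => a + b, 0) / n;
  let cov = 0;
  let varX = 0;
  let varY = 0;
  for (let i = 0; i < n; i++) {
    const dx = xs[i] - meanX;
    const dy = ys[i] - meanY;
    cov += dx * dy;
    varX += dx * dx;
    varY += dy * dy;
  }
  return cov / Math.sqrt(varX * varY);
}

// Correlate response length with the mean of the three judge scores.
function lengthBias(samples: JudgedSample[]): number {
  const lengths = samples.map((s) => s.response.length);
  const means = samples.map(
    (s) => (s.scores.relevance + s.scores.accuracy + s.scores.clarity) / 3,
  );
  return pearson(lengths, means);
}

// Made-up batch for illustration only.
const batch: JudgedSample[] = [
  { response: 'Yes.', scores: { relevance: 3, accuracy: 4, clarity: 3 } },
  { response: 'Yes, we ship there.', scores: { relevance: 4, accuracy: 4, clarity: 4 } },
  {
    response: 'Yes, we ship to Australia; delivery usually takes about a week.',
    scores: { relevance: 5, accuracy: 5, clarity: 5 },
  },
];
console.log(lengthBias(batch).toFixed(2));
```

In this made-up batch the longest answer is also the most complete, so a high correlation is expected rather than suspicious. The check becomes informative when you compare answers of similar quality but different lengths, or track the number across many real runs.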

There's a lot more to LLM-as-judge than what's covered here, including rubric design, bias mitigation, and production monitoring patterns. I wrote a full deep dive on this: LLM-as-Judge: A Practical Guide to Evaluating AI Outputs at Scale.

Integration Testing with Mocks

What it is: Testing your AI pipeline end-to-end but swapping the actual model call for a deterministic mock. This lets you test the logic around your AI (parsing, error handling, tool calling) without the cost and flakiness of real API calls.

When to use it: In CI/CD pipelines where you need fast, reliable, and free tests. Also great for testing error paths like rate limits or malformed responses.

Why it works: Your AI feature is more than just the model call. There's routing, validation, response parsing, and fallback logic, all of which can be tested deterministically.

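This test assumes your pipeline exports a `processTicket` function from a module of your own; `./ticket-processor` is a placeholder path.

```typescript
import { describe, it, expect, vi } from 'vitest';
// Hypothetical module under test: calls generateText from 'ai',
// parses the JSON reply, and routes the ticket.
import { processTicket } from './ticket-processor';

// Replace the AI SDK's generateText with a deterministic stub. vi.mock is
// hoisted, so the stub is in place before './ticket-processor' loads.
vi.mock('ai', () => ({
  generateText: vi.fn().mockResolvedValue({
    text: '{"category": "billing", "priority": "high"}',
  }),
}));

describe('ticket processor', () => {
  it('should parse and route a classified ticket', async () => {
    const result = await processTicket('I was charged twice');

    expect(result.category).toBe('billing');
    expect(result.priority).toBe('high');
    expect(result.routed).toBe(true);
  });
});
```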

This is where most of your test coverage should live. Mocks are fast, cheap, and catch the bugs that actually break your app: the ones in your code, not the model's.
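Mocking the `ai` module is one option. Another is to pass the model call into your pipeline as a parameter, so tests can feed it canned replies, malformed JSON, or a thrown rate-limit error without a mocking framework. The sketch below assumes a hypothetical `Generate` function type and ticket shape; the fallback (a general queue, not auto-routed) is an illustrative choice.

```typescript
// Hypothetical signature for the injected model call: prompt in, text out.
type Generate = (prompt: string) => Promise<string>;

type Category = 'billing' | 'technical' | 'general';
type Priority = 'low' | 'medium' | 'high';

interface TicketResult {
  category: Category;
  priority: Priority;
  routed: boolean;
}

const isCategory = (v: unknown): v is Category =>
  v === 'billing' || v === 'technical' || v === 'general';
const isPriority = (v: unknown): v is Priority =>
  v === 'low' || v === 'medium' || v === 'high';

// Illustrative fallback: anything we can't trust goes to a general queue
// and is marked as not auto-routed.
function fallback(): TicketResult {
  return { category: 'general', priority: 'low', routed: false };
}

// Pure parsing and validation. No model involved, so it is fully deterministic.
function parseTicketReply(raw: string): TicketResult {
  let data: unknown;
  try {
    data = JSON.parse(raw);
  } catch {
    return fallback(); // malformed JSON
  }
  if (typeof data !== 'object' || data === null) return fallback();
  const { category, priority } = data as Record<string, unknown>;
  if (!isCategory(category) || !isPriority(priority)) return fallback();
  return { category, priority, routed: true };
}

// Pipeline entry point. Errors from the model call (rate limits, timeouts)
// are caught and turned into the fallback instead of crashing the request.
async function processTicket(message: string, generate: Generate): Promise<TicketResult> {
  try {
    const raw = await generate(`Classify this ticket as JSON: "${message}"`);
    return parseTicketReply(raw);
  } catch {
    return fallback();
  }
}

// Usage: a fake model that simulates a rate-limit error.
const rateLimited: Generate = async () => {
  throw new Error('429 Too Many Requests');
};
processTicket('I was charged twice', rateLimited).then((r) => console.log(r.routed)); // → false
```

Keeping parsing in a pure function means most error-path tests need no async setup at all; only the thin wrapper around the model call does.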

Snapshot Testing

What it is: Capturing a model's response at a point in time and comparing future responses against it. Rather than demanding an exact match, it flags significant drift.

When to use it: When you want to detect unexpected changes after a model upgrade, prompt tweak, or dependency update.

Why it works: AI outputs shift over time as models get updated and prompts evolve. Snapshot tests act as a tripwire that tells you something changed, even if you didn't expect it to.

These work well as a manual review step. Run them periodically, eyeball the diffs, and update snapshots when the new output is genuinely better.
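A snapshot check for free-form text needs a fuzzier comparison than string equality (vitest's `toMatchSnapshot` compares exactly). The sketch below uses word-level Jaccard similarity, shared distinct words divided by all distinct words, between the stored snapshot and the new output, and flags drift below a threshold. The 0.6 threshold and the function names are assumptions to tune; embedding similarity is a common, more semantic alternative.

```typescript
// Split text into a set of lowercase word tokens.
function tokens(text: string): Set<string> {
  return new Set(text.toLowerCase().match(/[a-z0-9']+/g) ?? []);
}

// Jaccard similarity: shared words / distinct words in either text.
// 1 means the same vocabulary, 0 means no words in common.
function jaccard(a: string, b: string): number {
  const setA = tokens(a);
  const setB = tokens(b);
  if (setA.size === 0 && setB.size === 0) return 1;
  const shared = Array.from(setA).filter((t) => setB.has(t)).length;
  return shared / (setA.size + setB.size - shared);
}

interface DriftResult {
  similarity: number;
  drifted: boolean;
}

// Flag drift when the new output shares too little vocabulary with the snapshot.
function checkDrift(snapshot: string, current: string, threshold = 0.6): DriftResult {
  const similarity = jaccard(snapshot, current);
  return { similarity, drifted: similarity < threshold };
}

const snapshot = 'We ship to Australia. Delivery takes 5 to 7 business days.';
const reworded = 'We ship to Australia, and delivery takes 5 to 7 business days.';
const changed = 'Sorry, we only ship within the UK at the moment.';
console.log(checkDrift(snapshot, reworded).drifted); // → false
console.log(checkDrift(snapshot, changed).drifted);  // → true
```

Word overlap catches large rewrites cheaply but misses meaning flips that reuse the same words (for example an added "not"), which is one reason to keep the manual review step in the loop.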

Which Approach Should You Use?

All of them, but not equally. Here's a rough guide:

| Approach | Best For | Run In CI? | Cost |
|---|---|---|---|
| Unit Testing Prompts | Classification, structured output | Sparingly | $$ |
| Evals | Quality tracking over time | Nightly / pre-release | $$ |
| LLM-as-Judge | Open-ended response quality | Nightly | $$$ |
| Integration Mocks | Pipeline logic, error handling | Every commit | Free |
| Snapshot Testing | Drift detection | Periodic | $$ |

Start with integration mocks: they're free, fast, and catch the most common bugs. Add evals as your prompt library grows. Bring in LLM-as-judge when your outputs get complex enough that heuristics can't keep up.
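To turn the table above into something a CI script can act on, one option is to encode the schedule as data and select suites by trigger. This is a sketch under assumptions: the approach and trigger names are hypothetical, "Sparingly" for prompt unit tests is read here as nightly, and mapping each approach to real test files (by directory, tag, or vitest project) depends on your setup.

```typescript
type Trigger = 'commit' | 'nightly' | 'pre-release' | 'periodic';
type Approach = 'integration-mocks' | 'unit-prompts' | 'evals' | 'llm-judge' | 'snapshots';

// The "Run In CI?" column as data. 'unit-prompts' on nightly is an
// assumed reading of "Sparingly".
const schedule: Record<Approach, Trigger[]> = {
  'integration-mocks': ['commit'],
  'unit-prompts': ['nightly'],
  evals: ['nightly', 'pre-release'],
  'llm-judge': ['nightly'],
  snapshots: ['periodic'],
};

// Which test suites should run for a given CI trigger.
function suitesFor(trigger: Trigger): Approach[] {
  return (Object.keys(schedule) as Approach[]).filter((a) =>
    schedule[a].includes(trigger),
  );
}

console.log(suitesFor('commit').join(', '));  // → integration-mocks
console.log(suitesFor('nightly').join(', ')); // → unit-prompts, evals, llm-judge
```

Keeping the schedule in one place makes it easy to promote a suite (say, evals onto every commit once they're cheap enough) without hunting through pipeline files.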

The goal isn't 100% coverage. It's confidence that your AI feature does what you expect, and that you'll know quickly when it doesn't.